Editor’s Note: The complete testimony is available as a pdf here.

From: Mercatus Center/George Mason University

Testimony before the Joint Economic Committee

Jerry Ellig

Good morning Chairman Brady, Vice Chairman Klobuchar, and members of the committee. Thank you for inviting me to testify today.

I am an economist and research fellow at the Mercatus Center, a 501(c)(3) research, educational, and outreach organization affiliated with George Mason University in Arlington, Virginia. I’ve previously served as a senior economist for this committee and as deputy director of the Office of Policy Planning at the Federal Trade Commission. My principal research for the last 25 years has focused on the regulatory process, government performance, and the effects of government regulation. For these reasons, I’m delighted to testify on today’s topic.

For more than three decades, presidents of both political parties have instructed executive branch agencies to conduct Regulatory Impact Analysis when issuing significant regulations. Some independent agencies, such as the Securities and Exchange Commission, are required by law to assess the economic effects of their regulations. Executive orders and laws requiring economic analysis of regulations reflect a bipartisan consensus that economic analysis should inform, but not dictate, regulatory decisions.

Unfortunately, agencies’ Regulatory Impact Analyses are not nearly as informative as they ought to be, and there is often scant evidence that agencies utilized the analysis in decision making. These problems have persisted through multiple administrations of both political parties. The problem is institutional, not partisan or personal. Further improvement in the quality and use of Regulatory Impact Analysis will likely occur only as a result of legislative reform of the regulatory process. To achieve improvement, all agencies should be required to conduct thorough and objective Regulatory Impact Analysis for major regulations and to explain how the results of the analysis informed their decisions.

Let me elaborate on each of these points.

WHY REGULATORY IMPACT ANALYSIS IS NECESSARY

We expect federal regulation to accomplish a lot of important things, such as protecting us from financial fraudsters, preventing workplace injuries, preserving clean air, and deterring terrorist attacks. But regulation also requires sacrifices; there is no free lunch. Depending on the regulation, consumers may pay more, workers may receive less, our retirement savings may grow more slowly due to reduced corporate profits, and we may have less privacy or less personal freedom. Regulatory Impact Analysis is the key tool that makes these tradeoffs more transparent to decision makers. Understanding the effects of regulation has to start with sound Regulatory Impact Analysis.

A thorough Regulatory Impact Analysis should do four things:

- assess the nature and significance of the problem the agency is trying to solve, so the agency knows whether there is a problem that could be solved through regulation and, if so, the agency can tailor a solution that will effectively solve the problem;

- identify a wide variety of alternative solutions;

- define the benefits the agency seeks to achieve in terms of ultimate outcomes that affect citizens’ quality of life and assess each alternative’s ability to achieve those outcomes;

- identify the good things that regulated entities, consumers, and other stakeholders must sacrifice in order to achieve the desired outcomes under each alternative. In economics jargon, these sacrifices are known as “costs,” but just like benefits, costs may involve far more than monetary expenditures.

Without all of this information, regulation becomes a faith-based initiative. That is, where regulators have discretion under the law, they would be making choices based merely on the hope that good intentions will produce good results. Given the enormous influence regulation has on our day-to-day lives, decision makers have a responsibility to act based on knowledge of regulation’s likely effects, not just good intentions.

SHORTCOMINGS IN THE QUALITY AND USE OF REGULATORY IMPACT ANALYSIS

Scholarly research demonstrates that Regulatory Impact Analysis often falls short of the standards articulated in executive orders and Office of Management and Budget guidance. More often than not, agencies do not appear to use Regulatory Impact Analysis to inform major decisions. Regulatory Impact Analyses often seem to be advocacy documents written to justify decisions that were already made, rather than information that helped regulators figure out what to do.[1]

The Mercatus Center’s Regulatory Report Card provides some of the most recent evidence on the quality and use of Regulatory Impact Analysis.[2] The Regulatory Report Card is a qualitative evaluation of both the quality and use of regulatory analysis in federal agencies. The scoring process uses 12 criteria grouped into three categories:

1. Openness: how easily can a reasonably informed, interested citizen find the analysis, understand it, and verify the underlying assumptions and data?

2. Analysis: how well does the analysis define and measure the outcomes or benefits the regulation seeks to accomplish, define the systemic problem the regulation seeks to solve, identify and assess alternatives, and evaluate costs and benefits?

3. Use: how much did the analysis affect decisions in the proposed rule, and what provisions did the agency make for tracking the rule’s effectiveness in the future?

For each criterion, trained evaluators assigned a score ranging from 0 (no useful content) to 5 (comprehensive analysis with potential best practices).[3] Thus, each analysis has the opportunity to earn between 0 and 60 points.

The Report Card assesses how well a Notice of Proposed Rulemaking and the accompanying Regulatory Impact Analysis comply with key principles in Executive Order 12866, which governs regulatory analysis and review.[4] The scores do not assess whether the evaluators agree with the results of the analysis or believe the regulation is a good idea.

The online Report Card database now includes evaluations of every economically significant, prescriptive regulation proposed between 2008 and 2012—a total of 108 regulations.[5] Figure 1 shows average scores for each of the three categories of criteria. In each category, the average falls far short of the maximum possible score of 20 points.

Table 1 shows the average scores on each of the 12 criteria, plus the average total score. The average total score was just 31.2 out of 60 possible points—barely 50 percent. The highest total score ever achieved was 48 out of 60 possible points (80 percent), equivalent to a B-. This was the joint Environmental Protection Agency/National Highway Traffic Safety Administration regulation revising Corporate Average Fuel Economy standards proposed in 2009.
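The scoring arithmetic behind these figures can be checked with a short Python sketch. It assumes four criteria per category, which is inferred from the 12 criteria, three categories, and 20-point category maximum described above. All function and variable names are hypothetical, and the sample scores in the usage lines are made up for illustration rather than taken from any actual evaluation.

```python
# Illustrative sketch of the Report Card scoring arithmetic.
# Names are hypothetical; only the point scale and totals come from the testimony.

MAX_POINTS_PER_CRITERION = 5
CATEGORIES = ("Openness", "Analysis", "Use")
# Assumption: 12 criteria split evenly across 3 categories (20-point category maximum).
CRITERIA_PER_CATEGORY = 4

MAX_CATEGORY_SCORE = MAX_POINTS_PER_CRITERION * CRITERIA_PER_CATEGORY  # 20
MAX_TOTAL_SCORE = MAX_CATEGORY_SCORE * len(CATEGORIES)  # 60


def total_score(scores_by_category):
    """Sum all criterion scores after checking each one is between 0 and 5."""
    total = 0
    for category in CATEGORIES:
        scores = scores_by_category[category]
        if len(scores) != CRITERIA_PER_CATEGORY:
            raise ValueError(f"{category} needs {CRITERIA_PER_CATEGORY} scores")
        for score in scores:
            if not 0 <= score <= MAX_POINTS_PER_CRITERION:
                raise ValueError(f"score {score} is outside the 0-5 range")
        total += sum(scores)
    return total


def percent_of_maximum(score, maximum=MAX_TOTAL_SCORE):
    """Express a score as a percentage of the maximum possible points."""
    return 100 * score / maximum


# Made-up scores for a single hypothetical regulation.
sample = {
    "Openness": [4, 3, 3, 4],
    "Analysis": [3, 2, 3, 2],
    "Use": [2, 1, 1, 2],
}
print(total_score(sample), "of", MAX_TOTAL_SCORE, "points")

# Figures cited in the testimony: the 31.2-point average and the 48-point high score.
print(round(percent_of_maximum(31.2), 1), "percent")
print(percent_of_maximum(48), "percent")
```

On this scale the 31.2-point average works out to 52 percent of the possible points, and the 48-point high score to 80 percent.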

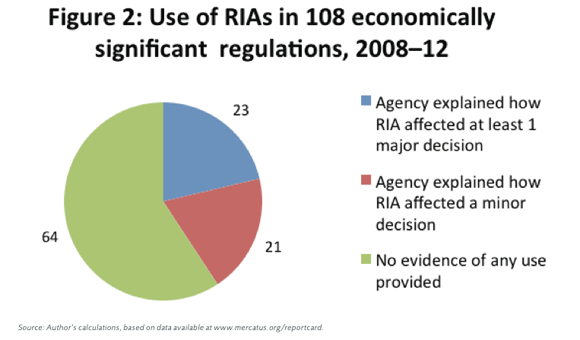

Table 1 shows that most of the lowest scores are for criteria measuring the use of analysis. The broadest Report Card criterion measuring use of analysis (Criterion 9) asks whether the agency claimed or appeared to use any part of the analysis to guide any decisions. As Figure 2 demonstrates, agencies often fail to provide any significant evidence that any part of the Regulatory Impact Analysis helped inform their decisions. Perhaps the analysis affects decisions more frequently than these statistics suggest, but agencies fail to document this in the Notice of Proposed Rulemaking or the Regulatory Impact Analysis. If so, then at a minimum there is a significant transparency problem.

For each Report Card criterion, we have found a few examples of reasonably good quality or use of analysis. These are documented in past testimony and in a series of short Mercatus on Policy publications.[6] But best practices are not widespread.

Unfortunately, these less-than-stellar Report Card results are consistent with prior published research on Regulatory Impact Analysis. Case studies document instances in which Regulatory Impact Analysis helped improve regulatory decisions by providing additional options regulators could consider or unearthing new information about benefits or costs of particular modifications to the regulation.[7] But Government Accountability Office studies and scholarly research reveal that in many cases, Regulatory Impact Analyses are not sufficiently complete to serve as a guide to agency decisions. The quality of analysis varies widely, and even the most elaborate analyses still have problems.[8] Surveying the scholarly evidence as of 2008, Robert Hahn and Paul Tetlock concluded that economic analysis has not had much impact, and the general quality of Regulatory Impact Analysis is low.[9] The Mercatus Center’s Regulatory Report Card suggests that matters have not improved since then.

IMPROVEMENT IN THE QUALITY AND USE OF REGULATORY IMPACT ANALYSIS REQUIRES REFORM OF THE REGULATORY PROCESS

The problems identified by the Report Card occurred under both President Bush and President Obama. An econometric analysis that controls for other factors affecting the quality and use of analysis finds that there is no statistically significant difference in Report Card scores between the Bush and Obama administrations, although Bush administration regulations that cleared OIRA review after June 1, 2008 tended to have lower Report Card scores.[10] Previous research by other scholars also finds little variation in the quality of Regulatory Impact Analysis across administrations of different parties.[11] Another consistent—but disturbing—pattern is that administrations of both parties appear to require less thorough analysis from agencies that are more central to the administration’s policy priorities. The Bush administration, for example, permitted the Department of Homeland Security to proceed with a number of regulations that were accompanied by very incomplete Regulatory Impact Analysis; the Obama administration did likewise with the first major regulations implementing the Patient Protection and Affordable Care Act.[12] This same pattern appears to occur with other agencies.[13]

The persistence of mediocre Regulatory Impact Analysis across administrations is an institutional problem, not a personal or partisan problem. Deficiencies in the quality and use of Regulatory Impact Analysis transcend individuals and administrations. Substantial impetus for improvement in the quality and use of Regulatory Impact Analysis will require changes in the regulatory process.

Because current law rarely requires comprehensive economic analysis to inform regulatory decisions, agencies often treat Regulatory Impact Analysis as a paperwork exercise necessary to clear a regulation through OIRA, rather than a tool to aid decision making. Instead, agencies focus on other factors they view as more central to ensuring that a regulation gets upheld in court. In the absence of a requirement for Regulatory Impact Analysis, these factors may actually discourage agencies from considering the pros and cons of a wide variety of alternatives. For example, agencies may tend to follow past precedent in designing new regulations because current regulatory approaches have already been defended and upheld in court. As a result, one agency economist noted, “We do what we always do, just trotting out the same old thing. That’s why we don’t come up with better regulations; we just come up with the same regulations in different areas.”[14]

When evaluating regulations for the Regulatory Report Card, we have found that when Congress requires agencies to consider specific factors such as costs or efficiency, they usually do so. Agencies pay attention to what the law says they should do, because otherwise a court might vacate the regulation. To improve the quality and use of Regulatory Impact Analysis, therefore, Congress could require federal agencies to conduct thorough Regulatory Impact Analysis before they write and propose significant regulations. The most obvious method would be a legislative requirement for Regulatory Impact Analysis coupled with judicial review. To enforce the law, judges need not engage in benefit-cost balancing or second-guess agency expertise. They would merely need to check that the agency’s analysis covered the topics specified in the law (such as analysis of the systemic problem, development of alternatives, and assessment of benefits and costs of alternatives), ensure that the analysis included the quality of evidence required by the legislation, and ensure that the agency explained how the results of the analysis affected its decisions.

Independent agencies are not currently subject to the executive orders on regulatory analysis and review. Some, such as the Securities and Exchange Commission, are required by law to conduct economic analysis when determining whether their regulations are in the public interest. Others have no such requirement. Scholarly research has found that many independent agencies conduct even less thorough economic analysis than executive branch agencies.[15] Requiring independent agencies to conduct Regulatory Impact Analysis and explain how they used it in decisions would likely improve their quality and use of analysis. Many of the independent agencies, such as the Consumer Product Safety Commission, Federal Trade Commission, Federal Communications Commission, and Consumer Financial Protection Bureau, deal with the same kinds of financial risk, physical risk, and consumer protection questions that executive branch agencies address in their assigned spheres of competence, so I see no reason a Regulatory Impact Analysis requirement could not apply to them as well.

Thank you for your time, and I look forward to your questions.

Footnotes

1. Richard Williams, “The Influence of Regulatory Economists in Federal Health and Safety Agencies” (Mercatus Working Paper, Mercatus Center at George Mason University, Arlington, VA, July 2008), http://mercatus.org/sites/default/files/publication/WP0815_Regulatory%20Economists.pdf; Wendy E. Wagner, “The CAIR RIA: Advocacy Dressed up as Policy Analysis,” in Reforming Regulatory Impact Analysis, ed. Winston Harrington et al. (Washington, DC: Resources for the Future, 2009), 57.

2. The Report Card methodology and 2008 scoring results are in Jerry Ellig and Patrick McLaughlin, “The Quality and Use of Regulatory Analysis in 2008,” Risk Analysis 32, no. 5 (May 2012): 855–80. Scores for all regulations evaluated in 2008 and subsequent years are available at www.mercatus.org/reportcard.

3. For the first several years, the evaluators were senior Mercatus Center regulatory scholars and graduate students trained in Regulatory Impact Analysis. Since 2010, we have developed a nationwide team of economics professors who serve as evaluators in conjunction with senior Mercatus Center regulatory scholars. Biographical information on current evaluators is available at www.mercatus.org/reportcard.

4. Exec. Order No. 12866, 58 Fed. Reg. 190 (Sept. 30, 1993), 51735–44, http://www.whitehouse.gov/sites/default/files/omb/inforeg/eo12866/eo12866_10041993.pdf. President Obama reaffirmed Exec. Order No. 12866 in Exec. Order No. 13563, “Improving Regulation and Regulatory Review,” 76 Fed. Reg. 14 (Jan. 21, 2011), 3821–23, http://www.whitehouse.gov/sites/default/files/omb/inforeg/eo12866/eo13563_01182011.pdf.

5. “Prescriptive” regulations are what most people think of when they think of regulations: they mandate or prohibit certain activities. This is distinct from budget regulations, which implement federal spending programs or revenue collection measures. The Report Card evaluated budget regulations in 2008 and 2009, then discontinued evaluating budget regulations in subsequent years because it was clear the budget regulations have much lower-quality analysis. See Patrick A. McLaughlin and Jerry Ellig, “Does OIRA Review Improve the Quality of Regulatory Impact Analysis? Evidence from the Bush II Administration,” Administrative Law Review 63 (2011): 179–202; Jerry Ellig and John Morrall, “Assessing the Quality of Regulatory Analysis” (Working Paper No. 10-75, Mercatus Center at George Mason University, Arlington, VA, December 2010).

6. Jerry Ellig, “Look Before You Leap: Improving Pre-Proposal Regulatory Analysis,” Congressional testimony, March 29, 2011, before the Committee on the Judiciary, Subcommittee on the Courts, Commercial, and Administrative Law, US House of Representatives; Jerry Ellig and James Broughel, “Regulation: What’s the Problem?,” Mercatus on Policy no. 100 (Nov. 2011); Jerry Ellig and James Broughel, “Regulatory Alternatives: Best and Worst Practices” (Mercatus on Policy, Mercatus Center at George Mason University, Arlington, VA, Feb. 2012); Jerry Ellig and James Broughel, “Baselines: A Fundamental Element of Regulatory Impact Analysis” (Mercatus on Policy, Mercatus Center at George Mason University, Arlington, VA, June 2012).

7. Winston Harrington, Lisa Heinzerling, and Richard D. Morgenstern, eds., Reforming Regulatory Impact Analysis (Washington, DC: Resources for the Future, 2009); Richard D. Morgenstern, Economic Analyses at EPA: Assessing Regulatory Impact (Washington, DC: Resources for the Future, 1997); Thomas O. McGarity, Reinventing Rationality: The Role of Regulatory Analysis in the Federal Bureaucracy (New York: Cambridge University Press, 1991).

8. See Art Fraas and Randall Lutter, “The Challenges of Improving the Economic Analysis of Pending Regulations: The Experience of OMB Circular A-4,” Annual Review of Resource Economics 3, no. 1 (2011): 71–85; Jamie Belcore and Jerry Ellig, “Homeland Security and Regulatory Analysis: Are We Safe Yet?,” Rutgers Law Journal 40, no. 1 (2008): 1–96; Robert W. Hahn, Jason Burnett, Yee-Ho I. Chan, Elizabeth Mader, and Petrea Moyle, “Assessing Regulatory Impact Analyses: The Failure of Agencies to Comply with Executive Order 12,866,” Harvard Journal of Law and Public Policy 23, no. 3 (2001): 859–71; Robert W. Hahn and Patrick Dudley, “How Well Does the Government Do Cost–Benefit Analysis?,” Review of Environmental Economics and Policy 1, no. 2 (2007): 192–211; Robert W. Hahn and Robert Litan, “Counting Regulatory Benefits and Costs: Lessons for the U.S. and Europe,” Journal of International Economic Law 8, no. 2 (2005): 473–508; Robert W. Hahn, Randall W. Lutter, and W. Kip Viscusi, Do Federal Regulations Reduce Mortality? (Washington, DC: AEI-Brookings Joint Center for Regulatory Studies, 2000); Government Accountability Office, Regulatory Reform: Agencies Could Improve Development, Documentation, and Clarity of Regulatory Economic Analyses, Report GAO/RCED-98-142 (May 1998); Government Accountability Office, Air Pollution: Information Contained in EPA’s Regulatory Impact Analyses Can Be Made Clearer, Report GAO/RCED 97-38 (April 1997).

9. Robert W. Hahn and Paul C. Tetlock, “Has Economic Analysis Improved Regulatory Decisions?,” Journal of Economic Perspectives 22, no. 1 (2008): 67–84.

10. See Jerry Ellig, Patrick A. McLaughlin, and John F. Morrall III, “Continuity, Change, and Priorities: The Quality and Use of Regulatory Analysis Across U.S. Administrations,” Regulation & Governance 7 (2013): 153–73; Jerry Ellig, “Midnight Regulation: Decisions in the Dark?” (Mercatus on Policy, Mercatus Center at George Mason University, Arlington, VA, Aug. 2012).

11. Hahn and Dudley, “How Well Does the Government Do Cost–Benefit Analysis?”

12. Belcore and Ellig, “Homeland Security and Regulatory Analysis”; Christopher J. Conover and Jerry Ellig, “Beware the Rush to Presumption, Part C: A Public Choice Analysis of the Affordable Care Act’s Interim Final Rules” (Working Paper No. 12-03, Mercatus Center at George Mason University, Arlington, VA, Jan. 2012); Christopher J. Conover and Jerry Ellig, “Rushed Regulation Reform” (Mercatus on Policy, Mercatus Center at George Mason University, Arlington, VA, Jan. 2012).

13. Ellig, McLaughlin, and Morrall, “Continuity, Change, and Priorities.”

14. Williams, “The Influence of Regulatory Economists,” 5.

15. Arthur Fraas and Randall L. Lutter, “On the Economic Analysis of Regulations at Independent Regulatory Commissions,” Administrative Law Review 63 (2011): 213–41.
